Klaviyo Agencies: Unlocking 2026 Predictive Personalization Secrets

Personalization is no longer about knowing. It’s about predicting.

A customer has not clicked, not browsed, not searched, and yet the system already leans toward what they will want next. That used to feel like magic.

In 2026, it will feel like engineering.

Personalization has moved from reactive to anticipatory. It is probabilistic and real-time. Where teams once stitched segments from past behavior, the next generation will surface offers based on likely intent.

That means anticipating a reorder before the cart fills, surfacing the right cross-sell while a first purchase is still warming, and quieting promotions for a customer on the edge of churn.

Most brands still personalize by looking backward.

The winners in 2026 will be those who look forward.

A Klaviyo marketing agency that masters predictive personalization will stop chasing clicks and start orchestrating choices before the click even happens.

Let’s cut to the chase.

Why traditional personalization is reaching its limits

—————————–

Here are three reasons why traditional personalization can no longer cut it.

1. Segments are static in a dynamic world

Rule-based segments were brilliant for their time. They are easy to understand and audit. But they update slowly, rely on fixed criteria, and flatten individual nuance into groups.

In today’s world of multi-session shoppers and shifting intent, a static cohort rarely maps to a moment of purchase readiness.

2. Behavior-based personalization is always late

Most personalization waits for someone to do something, then reacts. After the click. After the browse. After the abandonment.

By the time the rule fires, the buying moment has often passed. Reactive systems can salvage revenue, but they rarely create it.

3. Modern buyers move faster than rules can adapt

Customers jump across devices and channels in a single decision cycle. Micro-moments and short sessions outpace manual updates.

Key point: when personalization reacts, the moment is gone.

Predictive approaches put your message in front of intent before it hardens into action.

What predictive personalization really means in 2026

—————————–

From rules to probabilities

Predictive personalization moves from binary rules to probability estimates: likelihood to purchase, likelihood to churn, likelihood to respond. This changes the unit of work from segments to scores and probabilities that update continuously.
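To make the shift from rules to probabilities concrete, here is a minimal sketch of a purchase-likelihood score. It assumes a simple logistic model. The feature names, weights, and intercept are hypothetical and picked for illustration, not learned from data. It does not represent how Klaviyo's built-in models work internally.

```python
import math

# Hypothetical feature weights, hand-picked for illustration only.
# A real model would learn these from historical purchase outcomes.
WEIGHTS = {
    "sessions_last_7d": 0.35,
    "product_views_last_24h": 0.20,
    "days_since_last_order": -0.02,
    "email_clicks_last_30d": 0.15,
}
BIAS = -3.0  # hypothetical intercept: a low baseline purchase likelihood


def purchase_probability(features: dict) -> float:
    """Map recent-behavior features to a 0-1 purchase likelihood with a
    logistic (sigmoid) model. Missing features count as zero."""
    z = BIAS + sum(weight * features.get(name, 0.0) for name, weight in WEIGHTS.items())
    # Numerically stable sigmoid: avoids overflow in math.exp for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


warm = {"sessions_last_7d": 4, "product_views_last_24h": 6,
        "days_since_last_order": 20, "email_clicks_last_30d": 3}
cold = {"sessions_last_7d": 0, "product_views_last_24h": 0,
        "days_since_last_order": 200, "email_clicks_last_30d": 0}
print(f"warm: {purchase_probability(warm):.3f}, cold: {purchase_probability(cold):.3f}")
```

Because the output is a probability rather than a yes/no segment flag, it can be recomputed whenever a new event arrives and passed to a threshold or a decision step.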

From segments to individuals

No more “high-intent group.” Instead, a model scores each customer individually, continuously estimating which nudges will move the needle for that person right now. Treat each profile as a moving target, not a bucket.

From automation to decisioning

Automation executes. Decisioning chooses.

Predictive personalization systems decide what to say, when to say it, where to send it, and which incentive will maximize long-term value, all without waiting on manual rules.
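One common way to frame decisioning is expected-value maximization. For each candidate action, multiply the predicted conversion probability by the margin left after any incentive, subtract the cost of acting, and pick the best option, including doing nothing. The sketch below assumes the per-action probabilities already come from a model. The action names, margins, and costs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    conversion_prob: float  # model-estimated P(convert | this action)
    discount: float = 0.0   # incentive given away only if the customer converts
    send_cost: float = 0.0  # cost incurred whether or not the customer converts


def expected_value(action: Action, order_margin: float) -> float:
    """Expected profit from taking this action for one customer."""
    return action.conversion_prob * (order_margin - action.discount) - action.send_cost


def choose_action(actions: list, order_margin: float) -> Action:
    """Pick the action with the highest expected profit (ties go to the first listed)."""
    return max(actions, key=lambda a: expected_value(a, order_margin))


candidates = [
    Action("do_nothing", conversion_prob=0.10),
    Action("reminder_email", conversion_prob=0.14, send_cost=0.01),
    Action("discount_email", conversion_prob=0.18, discount=6.0, send_cost=0.01),
]
print(choose_action(candidates, order_margin=40.0).name)
print(choose_action(candidates, order_margin=10.0).name)
```

With these made-up numbers, the discount wins on a high-margin order but a plain reminder wins on a low-margin one. That is the point of decisioning: the incentive is chosen by value, not by a fixed rule.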

Core definition: predictive personalization uses AI to estimate future behavior and act before explicit signals appear.

Why Klaviyo is becoming a predictive personalization platform

—————————–

Here are three capabilities that are turning Klaviyo into a platform for predictive personalization.

1. Unified customer profiles as the foundation

Klaviyo’s value is its commerce-centric profile: orders, browsing, email and SMS behavior, returns, and support interactions.

When those signals live in one place, models can infer patterns across the whole customer lifecycle.

2. Real-time event streaming

Predictive work needs events as they happen. In-session triggers and micro-moment detection allow a system to update propensity scores mid-session and serve interventions before the customer drifts away.

3. Built-in AI and data science capabilities

Klaviyo has layered predictive models into core flows: churn likelihood, next purchase probability, and engagement scoring.

That makes it feasible for agencies to build lightweight decisioning systems without reinventing the entire stack.

Positioning: Klaviyo is evolving from an automation tool into a practical, commerce-first decisioning engine.

The role of Klaviyo agencies in this shift

—————————–

Why is predictive personalization not plug-and-play?

Prediction requires more than a checkbox. You need data modeling, careful signal selection, governance to avoid harmful interventions, and content architecture that supports many variations. You also need measurement frameworks that validate decision quality, not just open rates.

Agencies as architects, not implementers

Top agencies design prediction frameworks and personalization logic. They build modular creative, map signal flows, and document decision policies. They do not merely set up flows; they design systems that learn.

The new agency value proposition

The agency of 2026 sells intelligence engineering. From campaign execution to revenue orchestration, the deliverable becomes repeatable decision systems that compound value over time.

Now, let’s see what will power personalization in the years ahead.

The predictive signals that power 2026 personalization

—————————–

Here are four predictive signals that boost personalization in 2026.

1. Behavioral velocity

Not just actions, but speed and acceleration: a sudden rise in product page views, shortened path times, rapid add-to-cart behavior.

Velocity is an early sign of intent.
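Velocity and acceleration can be approximated from timestamped events by counting events in consecutive time windows. Velocity is the count in the latest window, and acceleration is the change from the previous window. The one-hour window and the example timestamps are assumptions for illustration.

```python
from datetime import datetime, timedelta


def window_counts(events: list, now: datetime, window: timedelta, n_windows: int) -> list:
    """Count events in consecutive windows ending at `now`, oldest window first.
    Events in the future or older than n_windows * window are ignored."""
    counts = [0] * n_windows
    for ts in events:
        age = now - ts
        if timedelta(0) <= age < window * n_windows:
            # age / window is a float; int() picks how many whole windows back it is.
            counts[n_windows - 1 - int(age / window)] += 1
    return counts


def velocity_and_acceleration(events: list, now: datetime,
                              window: timedelta = timedelta(hours=1)) -> tuple:
    """Return (events in the latest window, change versus the previous window)."""
    previous, latest = window_counts(events, now, window, n_windows=2)
    return latest, latest - previous


now = datetime(2026, 1, 15, 12, 0)
product_views = [datetime(2026, 1, 15, 10, 15), datetime(2026, 1, 15, 10, 40),
                 datetime(2026, 1, 15, 11, 5), datetime(2026, 1, 15, 11, 20),
                 datetime(2026, 1, 15, 11, 30), datetime(2026, 1, 15, 11, 45),
                 datetime(2026, 1, 15, 11, 50)]
print(velocity_and_acceleration(product_views, now))
```

A positive acceleration, as in this example, is the kind of early signal the section describes: the same customer is viewing products faster than they were an hour ago.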

2. Intent clustering

Patterns of repeated topic focus and cross-category movement reveal developing interests. Clusters form a probabilistic profile of what a customer is leaning toward next.
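A lightweight stand-in for intent clustering is a recency-weighted distribution over product categories. This is a simplification rather than a clustering algorithm. Each view contributes a weight that decays with age, and the normalized totals read as a rough probability of where the customer's interest is heading. The seven-day half-life and the category names are hypothetical.

```python
from collections import defaultdict


def interest_profile(views: list, half_life_days: float = 7.0) -> dict:
    """Turn (category, age_in_days) views into a normalized interest distribution."""
    weights = defaultdict(float)
    for category, age_days in views:
        # Exponential decay: a view one half-life old counts half as much as a fresh one.
        weights[category] += 0.5 ** (age_days / half_life_days)
    total = sum(weights.values())
    if total == 0:
        return {}
    return {category: w / total for category, w in weights.items()}


views = [("running_shoes", 0), ("running_shoes", 1), ("socks", 14), ("trail_gear", 0.5)]
profile = interest_profile(views)
print(max(profile, key=profile.get), {k: round(v, 2) for k, v in profile.items()})
```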

3. Micro-friction signals

Hesitation, partial scrolls, returning to the same item across sessions: these micro-friction signals often predict churn or purchase readiness more reliably than a single click.

4. Contextual signals

Time of day, device, session depth, and referral source shape intent. A midnight session on mobile carries different implications than a midday desktop session.

Key insight: the loudest actions are not always the most predictive. Subtle patterns matter more than raw volume.

How Klaviyo agencies build predictive personalization systems

—————————–

Here is a four-step approach Klaviyo agencies use to build predictive personalization systems.

Step 1: Define business outcomes first

Be explicit. Ask yourself: Are we optimizing conversion, retention, expansion, or reactivation? Every model and decision policy should map to a clear business outcome and an evaluation method.

Step 2: Select the right prediction targets

Choose targets that move the business: purchase likelihood, churn probability, product affinity. Focus on a few high-impact predictions before expanding.

Step 3: Design modular personalization blocks

Create interchangeable content modules that can be recombined by decision logic: variable hero blocks, offer modules, product carousels, and CTA variants. Modularity reduces creative overhead and keeps personalization scalable.

Step 4: Orchestrate across channels

Predictive decisions must act across email, SMS, on-site, and paid retargeting. A single decision should pick channel and timing to maximize impact while protecting reputation and frequency caps.
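The cross-channel step can be sketched as a single decision. It ranks channels by estimated response probability and skips any channel whose frequency cap is already used up for the period. If every channel is capped, it sends nothing. The channel names, weekly caps, and probabilities below are hypothetical; real caps would come from the brand's own contact policy.

```python
from typing import Optional


def pick_channel(response_prob: dict, sends_this_week: dict, weekly_caps: dict) -> Optional[str]:
    """Return the highest-probability channel still under its weekly cap, or None.
    Channels with no cap entry are treated as capped at zero (never used)."""
    eligible = [
        channel for channel in response_prob
        if sends_this_week.get(channel, 0) < weekly_caps.get(channel, 0)
    ]
    if not eligible:
        return None  # every channel is capped: stay silent to protect reputation
    return max(eligible, key=lambda channel: response_prob[channel])


probs = {"email": 0.08, "sms": 0.12, "push": 0.05}
sent = {"email": 1, "sms": 2}
caps = {"email": 3, "sms": 2, "push": 1}
print(pick_channel(probs, sent, caps))
```

Here SMS has the best estimated response but has hit its cap, so the decision falls back to email.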

Now, let’s discuss how predictive personalization can be helpful in 2026 for your business.

Use cases defining predictive personalization in 2026

—————————–

Here are four use cases where predictive personalization can be a winning move in 2026.

1. Predictive abandonment prevention

A model detects rising abandonment probability mid-session and triggers an anticipatory message or personalized incentive before the shopper abandons the cart. Intervention timing is chosen by probability, not a fixed timeout.
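The difference between a fixed timeout and probability-based timing can be shown with a small trigger function. Instead of waiting a set number of minutes after inactivity, it fires at the first in-session event where the model's abandonment estimate reaches a threshold. The 0.7 threshold and the score sequences are assumptions. Choosing the threshold is itself a trade-off between reaching more at-risk sessions and interrupting shoppers who would have bought anyway.

```python
from typing import Iterable, Optional


def first_trigger_point(abandon_probs: Iterable, threshold: float = 0.7) -> Optional[int]:
    """Return the index of the first in-session event where the modelled
    abandonment probability reaches the threshold, or None if it never does."""
    for step, prob in enumerate(abandon_probs):
        if prob >= threshold:
            return step
    return None


session_scores = [0.20, 0.35, 0.55, 0.72, 0.90]  # one hypothetical score per session event
print(first_trigger_point(session_scores))
```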

2. Anticipatory replenishment campaigns

Predict the reorder window and contact customers before they notice depletion. This increases convenience and reduces dependence on promotional nudges.
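A simple way to estimate the reorder window is to look at the gaps between a customer's past orders of a consumable product and schedule contact a few days before the typical gap elapses. This sketch uses the median gap and a three-day lead time, both assumptions. The order dates are illustrative.

```python
from datetime import date, timedelta
from statistics import median
from typing import Optional


def reminder_date(order_dates: list, lead_days: int = 3) -> Optional[date]:
    """Predict when to send a replenishment reminder, or None with fewer than 2 orders."""
    if len(order_dates) < 2:
        return None  # not enough history to estimate a reorder interval
    ordered = sorted(order_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    typical_gap = round(median(gaps))  # median resists one unusually early or late order
    predicted_reorder = ordered[-1] + timedelta(days=typical_gap)
    return predicted_reorder - timedelta(days=lead_days)


orders = [date(2026, 1, 1), date(2026, 1, 31), date(2026, 3, 2)]
print(reminder_date(orders))
```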

3. Churn risk suppression

When churn likelihood rises, the system suppresses broad discounts and instead triggers tailored recovery sequences designed to re-engage customers rather than condition them to promotions.

4. Cross-sell before the first purchase ends

Next-best-product logic suggests complementary items during the initial purchase window, improving average order value and creating a better first-order experience.
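Next-best-product logic can start as simple co-purchase counting. For the item in the current cart, it recommends the products that most often appeared alongside it in past orders. The order history below is made up, and the approach is a basic baseline. Production systems usually add normalization so that popular items do not dominate every recommendation.

```python
from collections import Counter


def next_best_products(orders: list, anchor: str, k: int = 2) -> list:
    """Rank products by how often they were bought together with `anchor`."""
    co_counts = Counter()
    for basket in orders:
        if anchor in basket:
            co_counts.update(item for item in basket if item != anchor)
    return [item for item, _ in co_counts.most_common(k)]


past_orders = [
    {"shampoo", "conditioner"},
    {"shampoo", "conditioner", "comb"},
    {"shampoo", "hair_oil"},
    {"shampoo", "hair_oil"},
    {"shampoo", "conditioner"},
    {"soap", "comb"},
]
print(next_best_products(past_orders, "shampoo"))
```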

These systems do not react; they anticipate, nudge, and adapt in real time.

Now, let’s see how you can measure the success of your predictive personalization efforts in 2026.

Measurement in a predictive personalization world

—————————–

From attribution to anticipation

Measure lift versus control, prediction accuracy, and decision quality. The question is less “which email send drove this conversion” and more “did our decision increase the probability of the desired outcome?”
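Lift versus control is straightforward arithmetic once a random holdout group is kept out of the predictive treatment: compare the conversion rates of the two groups. The counts below are invented for illustration. A real readout would also check statistical significance before acting on the difference.

```python
import math


def conversion_lift(treated_conversions: int, treated_size: int,
                    control_conversions: int, control_size: int) -> dict:
    """Absolute and relative conversion lift of a treated group over a random holdout."""
    if treated_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    treated_rate = treated_conversions / treated_size
    control_rate = control_conversions / control_size
    absolute = treated_rate - control_rate
    # Relative lift is undefined when the control group never converted.
    relative = absolute / control_rate if control_rate > 0 else math.nan
    return {"treated_rate": treated_rate, "control_rate": control_rate,
            "absolute_lift": absolute, "relative_lift": relative}


result = conversion_lift(460, 10_000, 400, 10_000)
print(f"{result['absolute_lift']:.3%} absolute, {result['relative_lift']:.0%} relative")
```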

New KPIs that matter

- Conversion probability lift

- Time-to-purchase reduction

- Churn risk delta

These metrics quantify whether predictions are improving business outcomes, not just clicks.

Why A/B testing evolves

Testing becomes testing of models and policies, not just creative variants. You test which decision strategies produce better long-term revenue, and you iterate on the policies that govern actions.

That was the good part. Now, let’s see what problems you may face.

Common mistakes agencies and brands will make

—————————–

Here are three common mistakes agencies and brands are likely to make.

1. Treating prediction as a feature instead of a system

Prediction is not a button you toggle. It is an architecture that requires data pipelines, model validation, and ongoing governance.

2. Over-automating without governance

Black-box decisions erode trust. If customers receive seemingly random incentives or messages, you risk brand and legal problems.

3. Ignoring content architecture

Models fail without flexible, modular creative. Prediction only works when content can be adapted quickly, safely, and at scale.

But then again, the partner you choose plays a vital role in the success of your personalization campaigns. So, let’s see how to find the best-fit Klaviyo agency on the first attempt.

How to choose the right Klaviyo agency for 2026

—————————–

Before you partner with a Klaviyo agency, here are a few things you must do without fail.

1. Look beyond certifications

Certifications are table stakes. Ask about data modeling, prediction frameworks, and decision logic.

2. Ask these key questions

- How do you design predictive journeys?

- How do you validate models and measure decision quality?

- How do you govern automated decisions to protect brand and lifetime value?

3. Watch for red flags

- Overpromising full certainty

- No measurement framework for prediction quality

- No explainability or auditability for decisions

Pick partners who treat personalization as engineering, not just marketing.

Wrapping up

That brings us to the business end of this article, where it’s fair to say that the best personalization will happen before the click.

Personalization is moving from reactive systems that respond to behavior to predictive systems that anticipate intent.

The shift is not incremental. It changes what it means to engage a customer: fewer messages, fewer guesses, and more precise decisions.

- From segments to systems.

- From campaigns to continuous decisioning.

- From chasing signals to shaping outcomes.

In 2026, personalization will not wait for behavior. It will anticipate it.

And the agencies that win will not send more messages.

They will make fewer, smarter decisions.

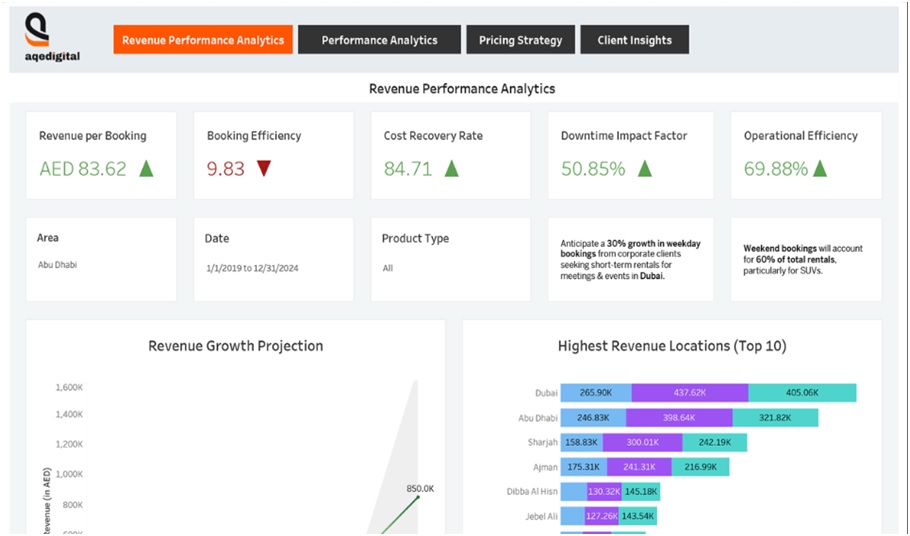

AQe Digital’s AI-powered dashboards unify real-time maintenance, production, and asset health data into a single operational view.

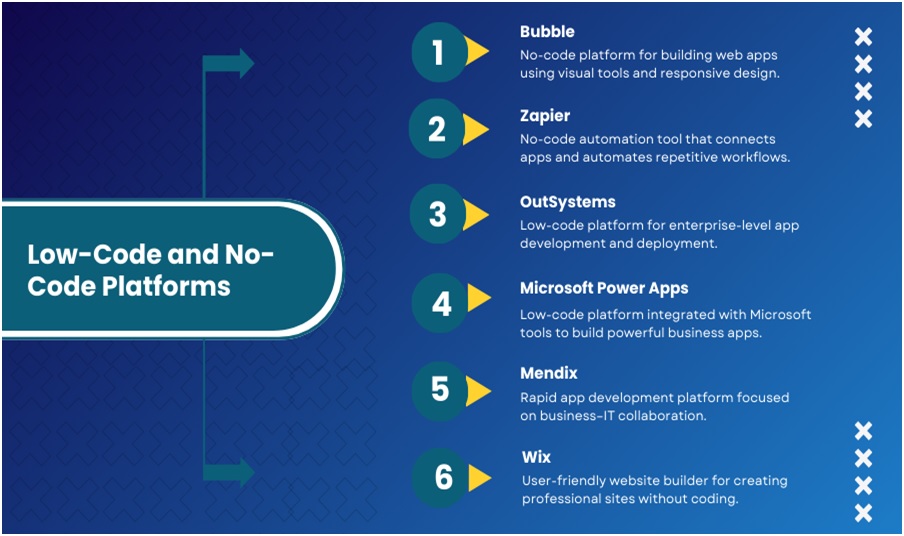

Organizations are spoiled for choices when it comes to low-code and no-code platforms available, each with their own unique features: